What It Is and Why Every Enterprise Should Care in 2026

The Sovereignty Wake-Up Call

Imagine this: a mid-sized law firm in Amsterdam adopts a popular AI assistant to speed up contract review. The tool works beautifully. Response times drop, associates handle more matters, and clients are impressed. Six months in, a partner asks a simple question: where exactly is our client data being processed? The answer, it turns out, isn’t straightforward, and the implications run deeper than anyone expected.

This scenario is playing out across Europe right now, in legal practices, financial institutions, healthcare organisations, and government agencies. The AI tools enterprises have adopted at pace over the past two years are overwhelmingly built on infrastructure controlled by a handful of US technology companies. And in 2026, that dependency is colliding head-on with a wave of regulatory enforcement, geopolitical tension, and rising client expectations around data control.

The numbers tell the story. According to Deloitte, over $100 billion is expected to be invested in sovereign AI compute in 2026 alone. McKinsey estimates that 30 to 40% of all AI spending could be influenced by sovereignty requirements, representing a market of $500 to $600 billion globally by 2030. Forrester predicts that 2026 is the year governments start choosing domestic-first approaches to AI procurement. And the EU AI Act, the world’s first comprehensive AI regulation, reaches a critical enforcement milestone on 2 August 2026, when the majority of its rules become operational.

AI sovereignty isn’t a niche policy debate anymore. It’s a board-level strategic issue. And if your enterprise hasn’t started thinking about it, you’re already behind.

What Is AI Sovereignty?

At its core, AI sovereignty is about control. It’s the ability of an organisation, or a nation, to develop, deploy, and govern its AI systems in alignment with its own rules, security requirements, and values. McKinsey’s research defines it across four dimensions: territorial (where data and compute physically reside), operational (who manages and secures them), technological (who owns the underlying stack and intellectual property), and legal (which jurisdiction governs access and compliance).

It’s worth distinguishing AI sovereignty from a few related concepts that often get tangled together. Data sovereignty focuses narrowly on where data is stored and processed, and which legal jurisdiction applies to it. Data residency is even more specific: it concerns only the physical location of the servers. AI sovereignty goes further, encompassing not just the data but the models themselves, the infrastructure they run on, and the governance frameworks that shape how they’re used.

IBM draws a useful distinction between AI sovereignty and sovereign AI. AI sovereignty refers to the broader capacity for control: the authority to determine how AI systems are used, who operates them, and whether they comply with local rules. Sovereign AI is the infrastructure and technical capability that makes that control possible: the data centres, the GPUs, the models built and trained within jurisdictional boundaries. Think of sovereign AI as the foundation, and AI sovereignty as the house you build on it.

Importantly, sovereignty is not about rejecting AI or retreating into isolation. It’s about informed control. As McKinsey’s analysts put it, not all AI workloads require strict sovereignty; the smart approach is to segment your portfolio, using public models for generic tasks and reserving sovereign infrastructure for high-value intellectual property and sensitive data.
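To make the segmentation idea concrete, here is a minimal Python sketch of a routing rule. It assumes a simple three-level sensitivity scale; the `Sensitivity` levels, the `route` function and the "public" and "sovereign" targets are illustrative names of our own, not part of McKinsey’s framework or any vendor product.

```python
from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    # Hypothetical classification levels; replace with your own data policy.
    PUBLIC = 1
    INTERNAL = 2
    CONFIDENTIAL = 3  # e.g. client files, employee records, proprietary IP


@dataclass
class Workload:
    name: str
    sensitivity: Sensitivity


def route(workload: Workload, threshold: Sensitivity = Sensitivity.CONFIDENTIAL) -> str:
    """Return a hypothetical deployment target for a workload.

    Workloads at or above the threshold stay on sovereign infrastructure;
    everything below it may use public models.
    """
    if workload.sensitivity.value >= threshold.value:
        return "sovereign"
    return "public"


portfolio = [
    Workload("marketing copy drafts", Sensitivity.PUBLIC),
    Workload("internal meeting summaries", Sensitivity.INTERNAL),
    Workload("client contract review", Sensitivity.CONFIDENTIAL),
]

for w in portfolio:
    print(f"{w.name}: {route(w)}")
```

The threshold is where the real policy decision lives: lowering it to `Sensitivity.INTERNAL` keeps internal material on sovereign infrastructure too, at the cost of running more workloads there.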

The Three Pillars of AI Sovereignty

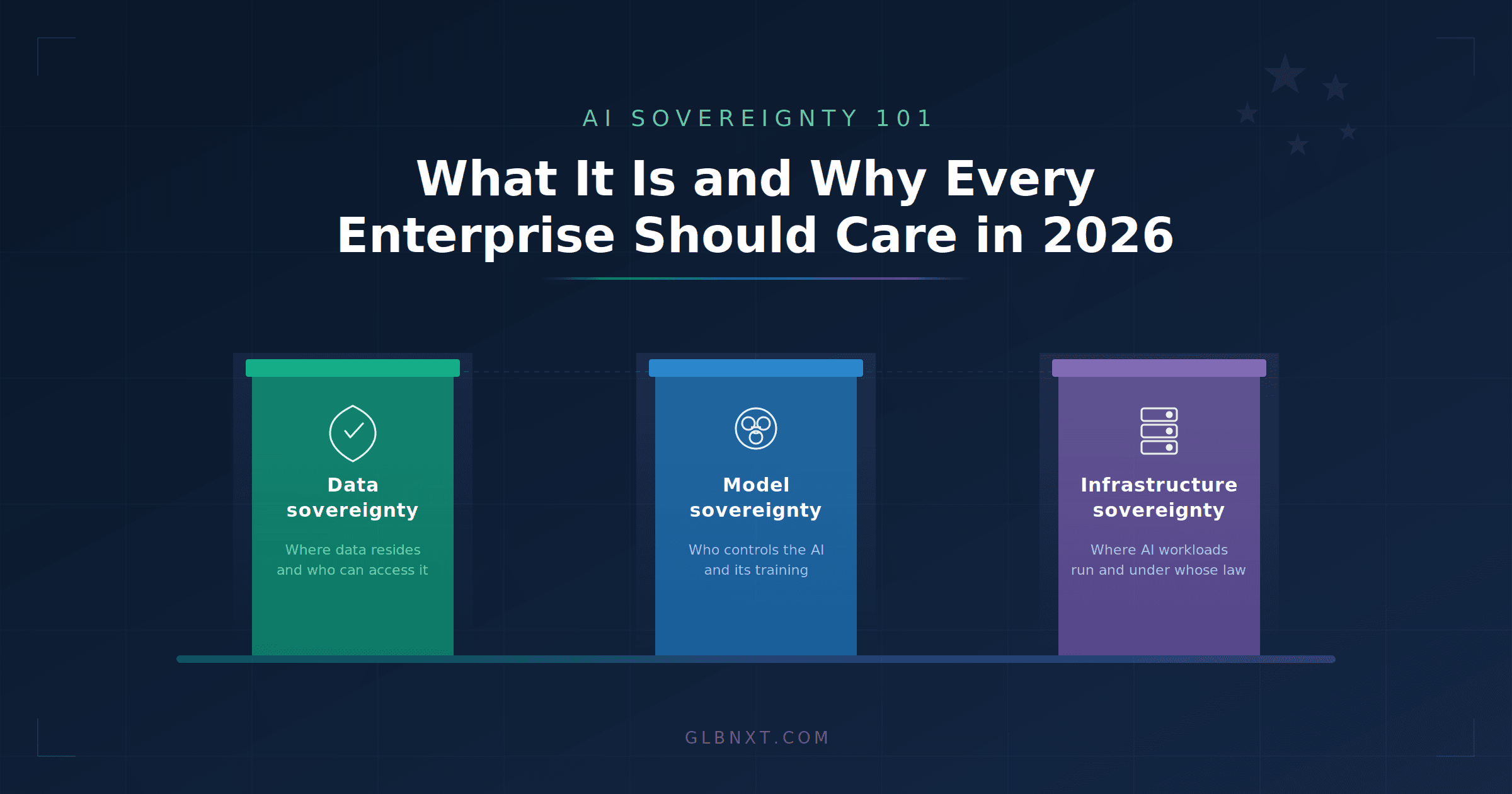

To make sovereignty practical rather than abstract, it helps to think in terms of three interconnected pillars: data sovereignty, model sovereignty, and infrastructure sovereignty. Each addresses a different dimension of control, and weaknesses in any one can undermine the others.

Data Sovereignty

This is the most familiar pillar, and for good reason: it’s where regulatory frameworks like GDPR have focused most of their attention. Data sovereignty means having control over where your data is stored, how it’s processed, and, crucially, who has jurisdictional access to it. It sounds straightforward, but in the world of cloud-based AI, it’s anything but. When an enterprise uses a US-headquartered AI provider, the data may physically reside in an EU data centre, yet still be legally accessible to US authorities under extraterritorial legislation. We’ll return to this tension shortly.

Model Sovereignty

This pillar asks: who controls the AI models your enterprise depends on? Who decides how they’re trained, what data they’re fine-tuned on, and what outputs they produce? In most current enterprise AI deployments, the answer is a third-party provider. That creates dependencies that go beyond data: your organisation’s AI capabilities are shaped by someone else’s decisions about model architecture, training data, and update cycles. Model sovereignty means retaining the ability to choose, swap, or fine-tune models without being locked into a single vendor’s ecosystem.

Infrastructure Sovereignty

The third pillar concerns the physical and operational layer: where AI workloads actually run, who operates that infrastructure, and which legal jurisdiction governs it. This is where the distinction between “sovereign-washed” cloud services and genuine sovereignty becomes critical. An EU-branded data centre operated by a US parent company may look sovereign on the surface, but if the parent entity is subject to US law, the sovereignty is, as one German CEO described it, an illusion.

Why Sovereignty Matters Now: The 2026 Context

Sovereignty concerns aren’t new, but several forces are converging in 2026 that make this the year enterprises can no longer defer action.

The Regulatory Push

The EU AI Act reaches its most significant enforcement date on 2 August 2026. On that date, the majority of the Act’s provisions become operational, including rules for high-risk AI systems, transparency obligations under Article 50, requirements for AI regulatory sandboxes in every member state, and the start of formal enforcement at both national and EU level. For enterprises using AI in fields like legal practice, healthcare, finance, or public services, this isn’t theoretical: it’s a compliance deadline with real consequences, including fines for prohibited AI practices of up to €35 million or 7% of global annual turnover, whichever is higher.
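The penalty ceiling itself is simple arithmetic. Under Article 99(3) of the Act, the maximum fine for prohibited practices is €35 million or 7% of total worldwide annual turnover for the preceding financial year, whichever is higher. The Python sketch below computes only that statutory ceiling; it deliberately ignores the lower tiers for other infringements, the rule for SMEs and start-ups (where the lower of the two amounts applies), and the case-specific factors regulators weigh when setting an actual fine. The function name is our own.

```python
def max_prohibited_practice_fine(worldwide_turnover_eur: int) -> float:
    """Statutory ceiling for prohibited AI practices (EU AI Act, Art. 99(3)).

    Simplified sketch: the higher of EUR 35 million or 7% of the preceding
    year's total worldwide annual turnover. Ignores the SME rule and all
    case-specific factors a regulator considers.
    """
    fixed_cap_eur = 35_000_000
    turnover_based_cap_eur = worldwide_turnover_eur * 7 / 100
    return float(max(fixed_cap_eur, turnover_based_cap_eur))


# EUR 200 million turnover: 7% is EUR 14 million, so the fixed cap applies.
print(f"{max_prohibited_practice_fine(200_000_000):,.0f}")    # 35,000,000
# EUR 1 billion turnover: 7% is EUR 70 million, which exceeds the fixed cap.
print(f"{max_prohibited_practice_fine(1_000_000_000):,.0f}")  # 70,000,000
```

The crossover sits at €500 million turnover: above that, the percentage-based cap dominates, which is why the exposure scales with company size.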

The AI Act doesn’t exist in isolation, either. It sits alongside an increasingly muscular GDPR enforcement regime, the EU Data Act (which took effect in September 2025 and imposes new switching and interoperability requirements on cloud providers), and sector-specific guidance from professional bodies. In the legal sector, for instance, both the Council of Bars and Law Societies of Europe (CCBE) and the Dutch Bar Association (NOvA) have published recommendations on AI use that explicitly flag data sovereignty and confidentiality risks. Beyond Europe, South Korea’s comprehensive AI law, the AI Basic Act, took effect in January 2026. Globally, the trend is unmistakable: regulation is tightening, and it’s sovereignty-aware.

The Geopolitical Dimension

The US CLOUD Act, enacted in 2018, grants US authorities the power to compel American companies to hand over data in their possession, custody, or control, regardless of where that data is physically stored. This directly conflicts with GDPR Article 48, which states that foreign court orders and administrative decisions requiring the transfer or disclosure of personal data are only recognised or enforceable when based on an international agreement, such as a mutual legal assistance treaty. The result is an irreconcilable legal tension: a US-headquartered provider can face a situation where complying with one jurisdiction’s law means violating another’s, and its European customers inherit that risk.

This isn’t hypothetical. In late 2025, the American IT services company Kyndryl announced its intention to acquire Solvinity, a Dutch managed cloud provider. Several Dutch government clients, including the municipality of Amsterdam and the Ministry of Justice and Security, had specifically chosen Solvinity to reduce their dependence on American firms and mitigate CLOUD Act risks. Solvinity manages critical national infrastructure, including the Netherlands’ citizen authentication system. The acquisition places these systems squarely within the potential reach of US authorities. It was, as multiple reports described it, an unpleasant surprise.

Meanwhile, institutions based in Europe are taking action too. The International Criminal Court in The Hague moved in late 2025 to replace its Microsoft office software with a European open-source alternative, prompted in part by an incident in which its chief prosecutor was reportedly locked out of his Microsoft email account after the US imposed sanctions on him.

The Concentration Risk

Roughly two-thirds of Europe’s cloud infrastructure market is controlled by three American companies: Amazon Web Services, Microsoft Azure, and Google Cloud. Some analysts put the broader figure even higher: the Eurostack Foundation estimates that 90% of Europe’s digital infrastructure is controlled by non-European, predominantly American companies. This isn’t just a sovereignty concern; it’s a concentration risk. When critical business operations depend on a small number of foreign providers, any geopolitical disruption, regulatory shift, or commercial decision by those providers can have outsized consequences.

The “sovereign cloud” offerings from US hyperscalers (AWS’s European Sovereign Cloud, Microsoft’s Azure sovereign regions, and Google’s distributed cloud) have been met with scepticism by legal experts. The core issue remains: as long as the parent company is a US entity, it’s subject to US law. As one tech law expert noted, the physical location of the data then becomes irrelevant to whether US law applies.

What Is at Stake for Enterprises

The risks of ignoring sovereignty are concrete and mounting. For regulated industries in particular, this isn’t just about compliance checklists; it’s about the viability of AI adoption itself.

Regulatory exposure is the most obvious risk. With the EU AI Act’s obligations now being enforced, and GDPR fines continuing to climb, enterprises that can’t demonstrate control over their AI data flows face significant financial and legal liability. But the risks extend well beyond fines. In professional services, particularly legal practice, a breach of client confidentiality doesn’t just attract a penalty; it can end careers and destroy firms. The CCBE has explicitly warned that data entered into AI tools may be stored and reused by providers for training purposes, creating a confidentiality risk that strikes at the heart of the lawyer-client relationship.

Vendor lock-in presents another strategic risk. Enterprises deeply embedded in a single provider’s ecosystem face limited transparency, restricted portability, and the real possibility that commercial or geopolitical decisions outside their control will reshape their AI capabilities overnight. The EU Data Act’s new switching requirements are designed to address this, but regulatory remedies take time, and in the interim, the dependency is real.

Perhaps most importantly, client expectations are shifting. In sectors built on trust, such as legal, financial, and healthcare, clients are increasingly asking where and how their data is processed. Enterprises that can answer those questions clearly and credibly will earn a competitive advantage. Those that can’t will find themselves losing business to competitors who can.

What Sovereign AI Looks Like in Practice

Sovereign AI isn’t a single product or certification; it’s an approach to building and deploying AI that embeds control at every layer. In practice, that means several things working together.

It starts with EU-hosted infrastructure that’s genuinely free from extraterritorial legal exposure. That means the infrastructure provider itself, not just the data centre, is headquartered and legally domiciled within the EU. This distinction matters enormously: a German data centre operated by an American parent company does not deliver true sovereignty, regardless of what the marketing materials say.

It means transparent data processing agreements that make clear where data goes, who can access it, and, critically, that client data is never used to train or refine the AI models. This “zero training on client data” commitment is particularly important in professional services, where the CCBE identifies model training on client inputs as a primary confidentiality risk.

It means model flexibility: the ability to choose, swap, or fine-tune AI models without being locked into a single vendor’s proprietary ecosystem. Open architectures and interoperable standards (like the Model Context Protocol, an open protocol gaining traction as a standard way to connect AI systems to external tools and data sources) reduce dependency and future-proof investment.
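Model flexibility is largely an architectural choice. One common pattern is to make application code depend on a narrow interface rather than on a specific vendor SDK, so the model behind it can be swapped through configuration. The Python sketch below illustrates that adapter pattern under stated assumptions: `ChatModel`, both backend classes and their canned responses are hypothetical stand-ins, and this is not the Model Context Protocol’s API.

```python
from typing import Protocol


class ChatModel(Protocol):
    """Minimal interface the application depends on (hypothetical)."""

    def complete(self, prompt: str) -> str: ...


class EUHostedModel:
    """Stand-in for a model served on EU-domiciled infrastructure (hypothetical)."""

    def complete(self, prompt: str) -> str:
        return f"[eu-model] {prompt[:40]}"


class LocalOpenWeightsModel:
    """Stand-in for an open-weights model run in-house (hypothetical)."""

    def complete(self, prompt: str) -> str:
        return f"[local-model] {prompt[:40]}"


def summarise_clause(model: ChatModel, clause: str) -> str:
    # Business logic only sees the interface, so the backend can be swapped
    # through configuration rather than by rewriting this function.
    return model.complete(f"Summarise this clause: {clause}")


MODELS: dict[str, ChatModel] = {
    "eu-hosted": EUHostedModel(),
    "local": LocalOpenWeightsModel(),
}

for name, model in MODELS.items():
    print(name, "->", summarise_clause(model, "The supplier shall indemnify..."))
```

In a real deployment each adapter would wrap a provider’s client library; the point of the design is that `summarise_clause` never changes when the backend does.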

And it means compliance by design rather than compliance as afterthought. The most effective sovereign AI solutions don’t bolt privacy and governance onto existing platforms; they’re architected from the ground up with regulatory obligations as first-order requirements. This is the difference between a solution that happens to be GDPR-compliant and one where compliance is technically enforced in the architecture itself.

Getting Started: A Practical Roadmap

If sovereignty feels like a large and complex undertaking, that’s because it is. McKinsey’s research shows that sovereign cloud and AI migrations typically take three to four years, not because of technology limitations, but because of the organisational work involved in moving regulated workloads. The good news is that you don’t need to solve everything at once. A phased approach works well.

First, audit your current AI stack. Map where your data flows when you use AI tools. Identify which providers are US-headquartered and therefore potentially subject to the CLOUD Act. Understand which of your AI use cases involve sensitive data (client information, employee records, proprietary IP) and which are genuinely low-risk.
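Even a spreadsheet-level inventory can surface the highest-risk combinations. The Python sketch below assumes a hypothetical inventory format with one record per AI tool, and flags tools that handle sensitive data through a provider whose parent company is headquartered in the US, since that is where CLOUD Act exposure sits regardless of data-centre location. Tool and provider names are placeholders.

```python
from dataclasses import dataclass


@dataclass
class AITool:
    name: str
    provider: str
    provider_hq_country: str  # country of the provider's parent company, not its data centre
    handles_sensitive_data: bool


def flag_for_review(tools: list[AITool]) -> list[AITool]:
    """Return tools that process sensitive data via a US-headquartered provider.

    These are the first candidates for a CLOUD Act exposure review.
    """
    return [t for t in tools if t.provider_hq_country == "US" and t.handles_sensitive_data]


inventory = [
    AITool("contract review assistant", "Provider A", "US", True),
    AITool("marketing copy generator", "Provider B", "US", False),
    AITool("document search", "Provider C", "NL", True),
]

for tool in flag_for_review(inventory):
    print(f"Review: {tool.name} ({tool.provider}, HQ {tool.provider_hq_country})")
```

Here only the contract review assistant is flagged: the marketing tool is US-hosted but low-risk, and the document search tool handles sensitive data through an EU-headquartered provider.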

Second, map your regulatory obligations. GDPR is the baseline, but sector-specific rules matter enormously. If you’re in legal practice, the NOvA and CCBE recommendations should shape your AI policy. If you’re in finance, the EU AI Act’s high-risk classifications and DORA requirements apply. If you’re in healthcare, health data is a special category of personal data under GDPR Article 9, which brings stricter processing conditions. Don’t just think about what’s required today; consider what’s coming by August 2026 and beyond.

Third, evaluate your providers against sovereignty criteria. Ask hard questions: Where is the provider headquartered? Is it subject to extraterritorial legislation? Is client data used for model training? Can you switch providers without losing your data or workflows? Is compliance contractually promised, or technically enforced? The answers will tell you a lot about whether your current setup is genuinely sovereign or merely sovereign-washed.
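These five questions translate naturally into a structured assessment. The Python sketch below uses hypothetical field names and example answers, and reports which criteria a provider fails rather than computing a weighted score, on the reasoning that one serious gap, such as extraterritorial exposure, cannot be offset by strengths elsewhere.

```python
from dataclasses import dataclass


@dataclass
class ProviderAssessment:
    # Each field mirrors one of the five questions (field names are hypothetical).
    eu_headquartered: bool
    subject_to_extraterritorial_law: bool
    trains_on_client_data: bool
    supports_portable_exit: bool
    compliance_technically_enforced: bool


def sovereignty_gaps(a: ProviderAssessment) -> list[str]:
    """List the criteria a provider fails; an empty list means no gaps found."""
    gaps = []
    if not a.eu_headquartered:
        gaps.append("not EU-headquartered")
    if a.subject_to_extraterritorial_law:
        gaps.append("subject to extraterritorial legislation")
    if a.trains_on_client_data:
        gaps.append("uses client data for model training")
    if not a.supports_portable_exit:
        gaps.append("no portable exit for data and workflows")
    if not a.compliance_technically_enforced:
        gaps.append("compliance only contractually promised")
    return gaps


# Example: an EU-branded offering whose parent company is a US entity.
candidate = ProviderAssessment(
    eu_headquartered=False,
    subject_to_extraterritorial_law=True,
    trains_on_client_data=False,
    supports_portable_exit=True,
    compliance_technically_enforced=False,
)
print(sovereignty_gaps(candidate))
```

A non-empty result is a prompt for harder questions, not an automatic verdict; the answers themselves still have to come from contracts, audits, and the provider’s corporate structure.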

Fourth, start small. Pilot sovereign AI in your most sensitive workflows first: the ones involving client confidentiality, regulated data, or high-value IP. This lets you build confidence and internal expertise without attempting a wholesale migration. McKinsey’s research supports this phased approach, noting that leading enterprises segment their workloads, reserving sovereign infrastructure for what truly needs it.

Fifth, build internal literacy. Sovereignty isn’t just an IT decision; it touches legal, compliance, procurement, and executive leadership. Fast Company’s analysis captures this well: governance succeeds when it reflects the reality that employees will experiment with AI regardless of policy, and when it provides approved, enterprise-grade environments where teams can adopt new tools safely.

Sovereignty as Competitive Advantage

It’s tempting to frame AI sovereignty as a cost centre or compliance burden, one more thing to worry about in an already complex regulatory environment. But that framing misses the strategic opportunity.

Enterprises that move early on sovereignty are building something their competitors can’t easily replicate: trust. In regulated industries especially, being able to tell clients exactly where their data goes, to demonstrate that AI outputs are governed by EU law, and to prove that confidential information never leaves a controlled environment is not a checkbox exercise. It’s a genuine differentiator.

McKinsey estimates that sovereign AI could represent a €480 billion GDP uplift for Europe alone, with regulated industries positioned to profit first because they could finally deploy AI use cases at scale with the right sovereign setup. Deloitte’s research shows that the 13% of enterprises that have established sovereign, AI-ready foundations are realising up to five times the return on investment compared to their peers.

The question in 2026 isn’t whether your enterprise needs AI sovereignty. It’s whether you can afford to operate without it. The regulatory landscape is clear, the geopolitical risks are documented, and the competitive dynamics are shifting. Enterprises that build sovereign AI foundations now (thoughtfully, in phases, starting with their most sensitive workloads) will be the ones leading the next wave of trustworthy AI adoption.

Because in the end, technological progress and your professional values shouldn’t be in tension. They should advance together.

About GLBNXT

GLBNXT provides sovereign AI solutions built for regulated industries. Our platform is 100% EU-hosted, GDPR-compliant by design, and built with zero training on client data. We work with legal practices, government advisory firms, and enterprises that need AI they can trust. To learn more or schedule a demonstration, visit www.glbnxt.com.

Key Sources

McKinsey, “Sovereign AI ecosystems for strategic resilience and economic impact,” March 2026

Deloitte, “State of AI in the Enterprise: The Untapped Edge,” via World Economic Forum, January 2026

Deloitte, “A new era of self-reliance: Navigating technology sovereignty,” TMT Predictions 2026

EU AI Act Implementation Timeline (artificialintelligenceact.eu)

European Commission, “AI Act,” Shaping Europe’s digital future, 2026

IBM, “What is AI Sovereignty?,” February 2026

Computer Weekly, “Sovereign cloud and AI services tipped for take-off in 2026”

The Register, “Europe gets serious about cutting US digital umbilical cord,” December 2025

Fast Company, “Sovereign AI is reshaping enterprise responsibility,” March 2026

CIO.com, “Building sovereignty at speed in 2026,” December 2025

CCBE Guide on the Use of Generative AI by Lawyers, October 2025

NOvA Recommendations on AI in Legal Practice, 2025